The Frontier AI Inference Cloud

Inference performance drives profitability.

Deploy frontier open-weight and custom AI models with unmatched efficiency, maximizing token throughput and margins.

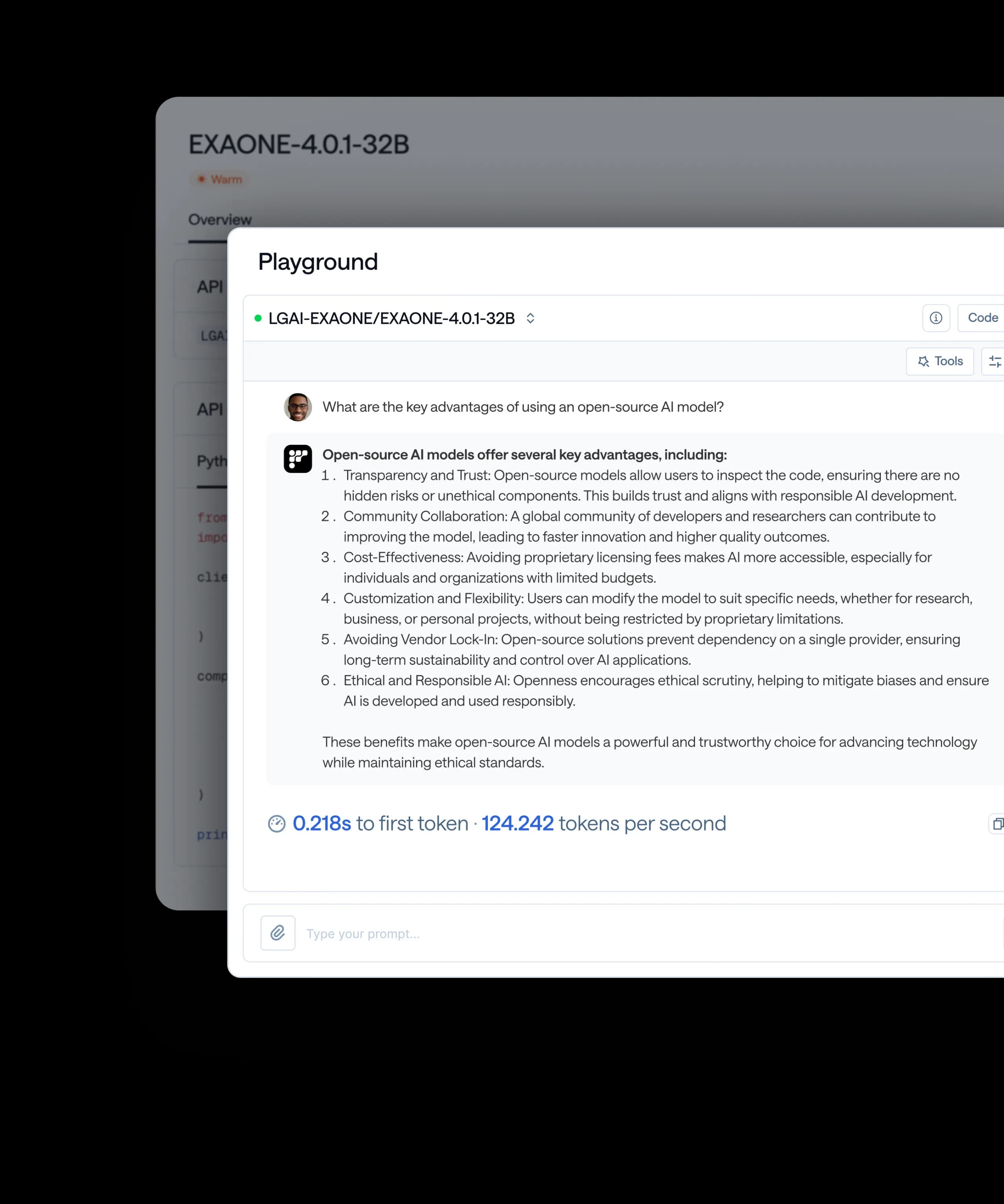

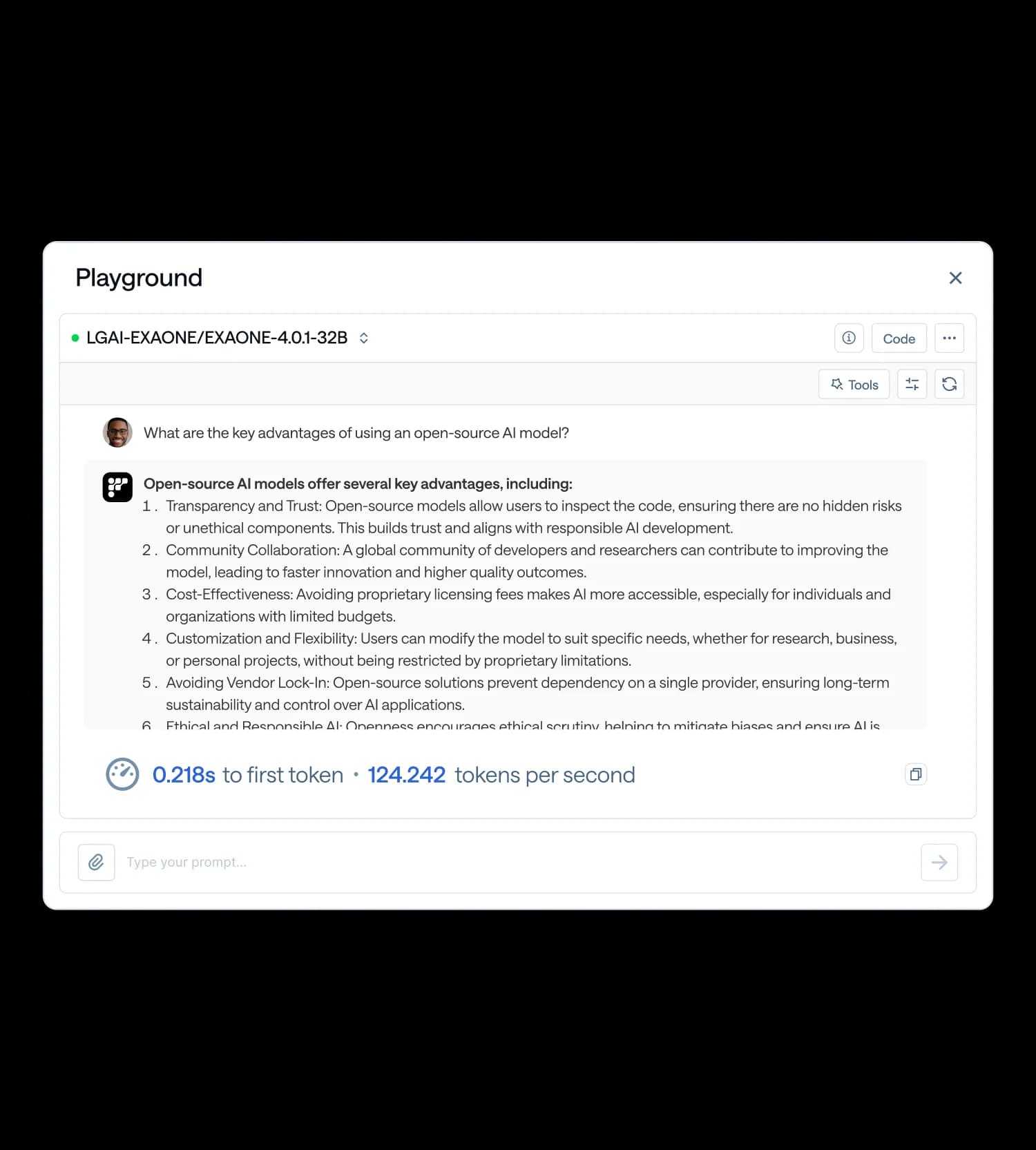

The fastest inference platform

Turn latency into your competitive advantage. Our purpose-built stack delivers at least 2× faster inference by combining model-level breakthroughs (custom GPU kernels, smart caching, continuous batching, speculative decoding, and parallel inference) with infrastructure-level optimizations such as advanced caching and multi-cloud scaling. The result is unmatched throughput, ultra-low latency, and cost efficiency that scale seamlessly across abundant GPU resources.

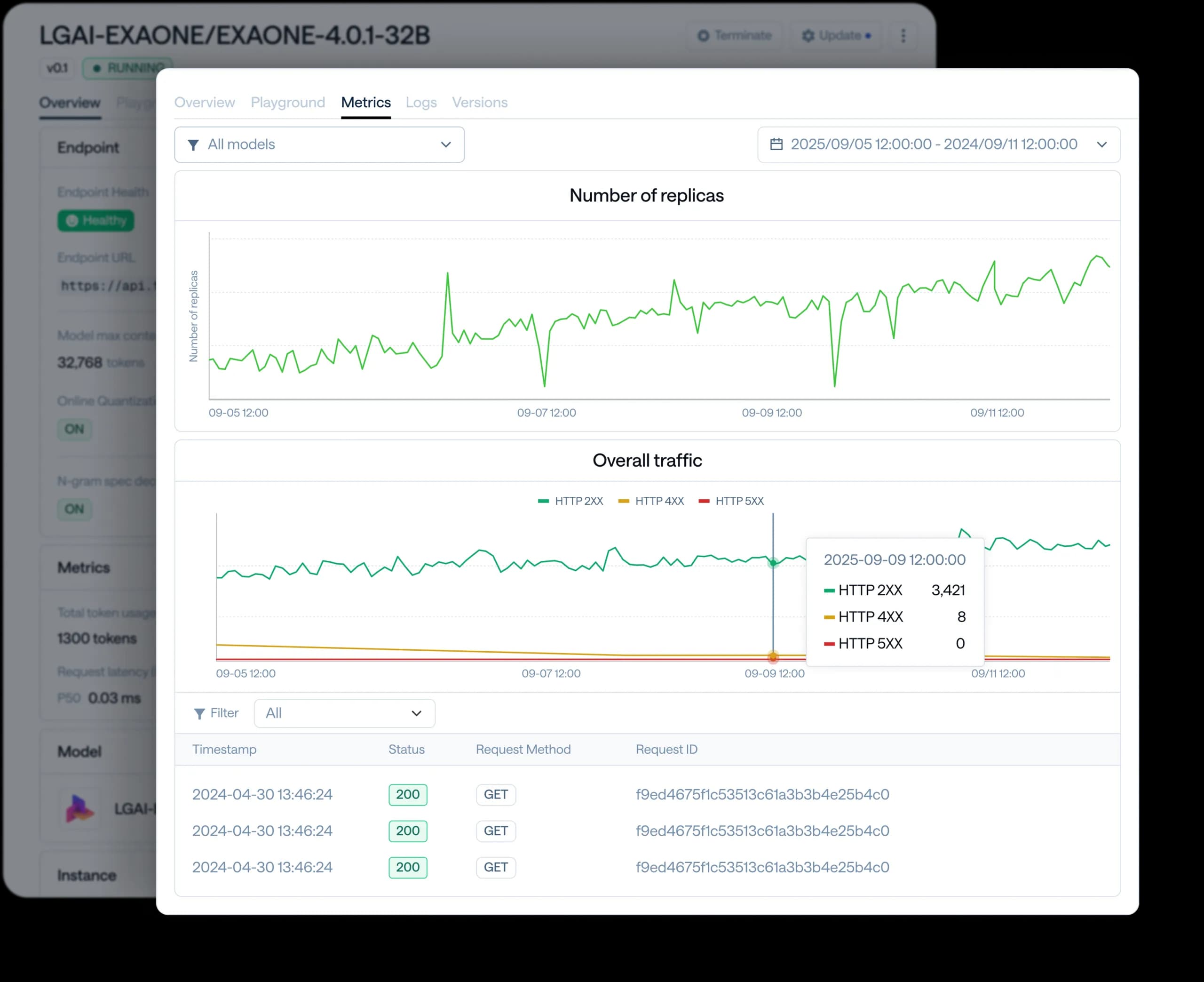

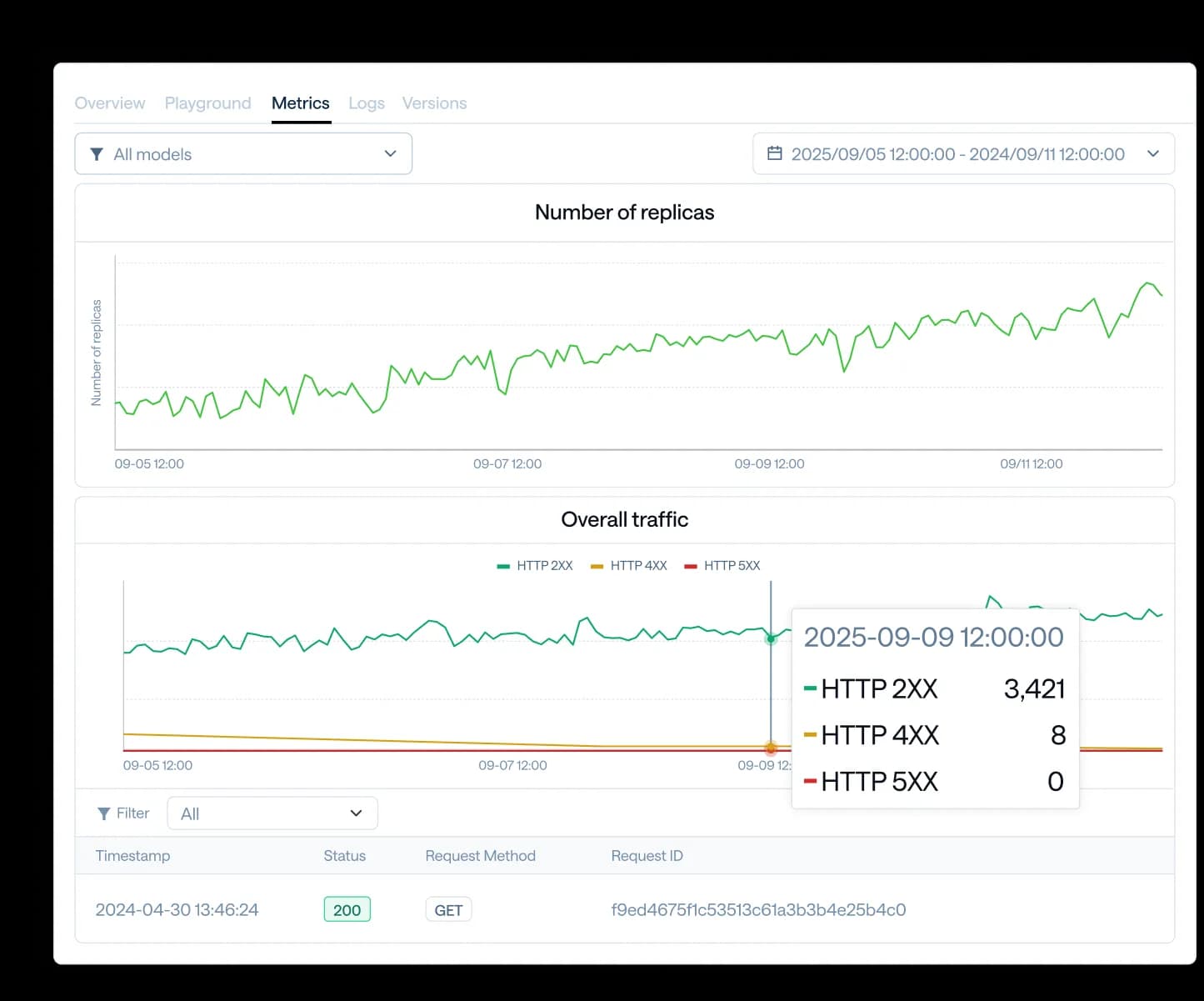

Guaranteed reliability, globally delivered

FriendliAI delivers 99.99% uptime SLAs with geo-distributed infrastructure and enterprise-grade fault tolerance. Your AI stays online and responsive through unpredictable traffic spikes, backed by GPU fleets across global regions that scale reliably as your business grows. With built-in monitoring and a compliance-ready architecture, you can trust FriendliAI to keep mission-critical workloads running wherever your users are.

570,000 models, ready to go

Instantly deploy any of 570,000 Hugging Face models — from language to audio to vision — with a single click. No setup or manual optimization required: FriendliAI takes care of deployment, scaling, and performance tuning for you. Need something custom? Bring your own fine-tuned or proprietary models, and we’ll help you deploy them just as seamlessly — with enterprise-grade reliability and control.

How teams scale with FriendliAI

Learn how leading companies achieve unmatched performance, scalability, and reliability with FriendliAI.

Our custom model API went live in about a day with enterprise-grade monitoring built in.

Rock-solid reliability with ultra-low tail latency.

Scale to trillions of tokens with 50% fewer GPUs, thanks to FriendliAI.

Fluctuating traffic is no longer a concern because autoscaling just works.

Friendli Engine is an irreplaceable solution for generative AI serving.

Latest from FriendliAI

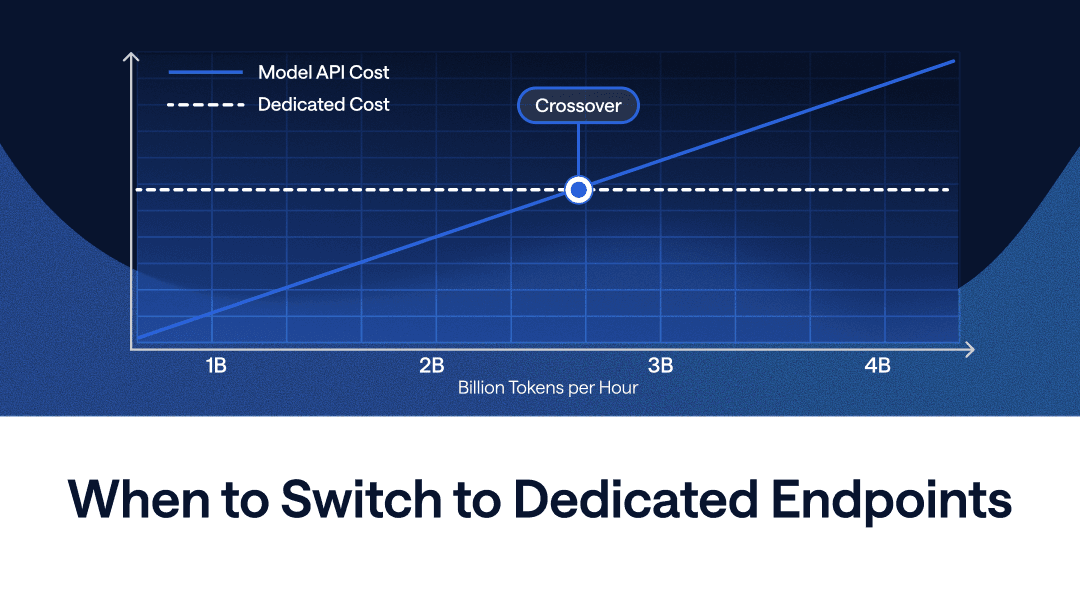

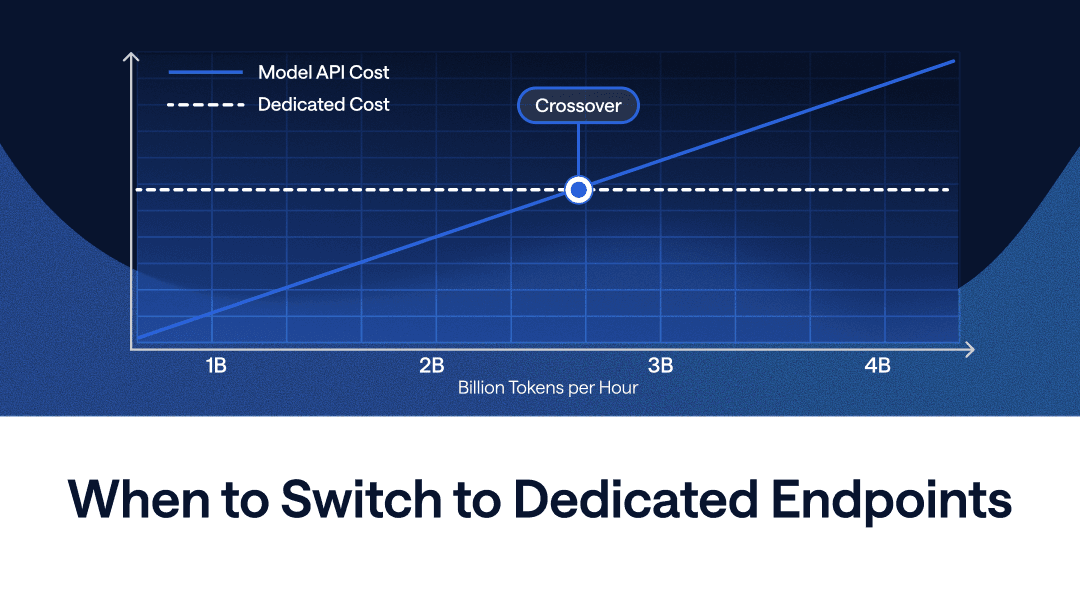

At What Scale Do Dedicated Endpoints Make Sense?

Run NVIDIA's Most Powerful Open Reasoning Model on Day 0 — Nemotron 3 Ultra on FriendliAI

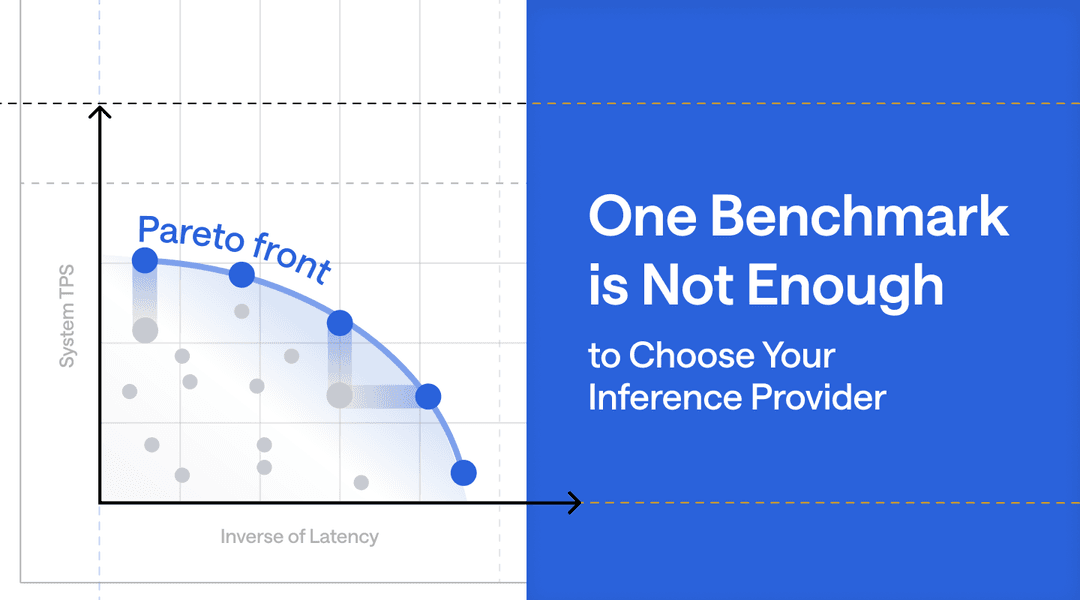

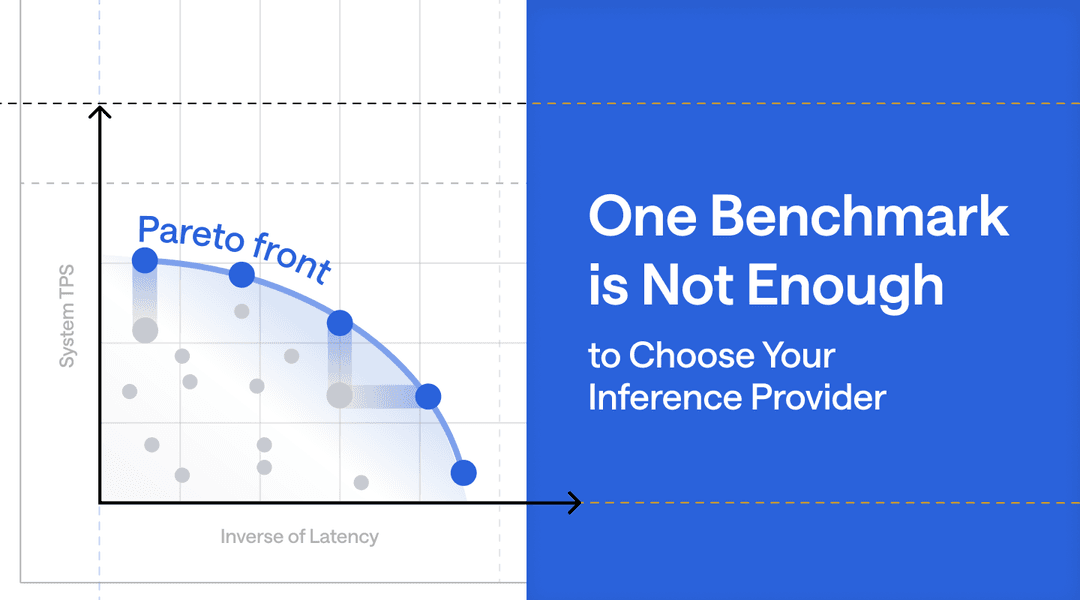

One Benchmark Is Not Enough to Choose Your Inference Provider

Kimi K2.6 Meets FriendliAI: Frontier Agentic AI, Deployed in One Click

Accelerating Inference on Friendli Dedicated Endpoints with Draft-Model Speculative Decoding

What's So Special About DeepSeek V4? Find Out On FriendliAI

FriendliAI Expands to San Francisco to Scale Frontier AI Inference for Open-Weight and Custom Models

Gemma-4-31B-it API on FriendliAI: #1 Output Speed & Response Time

Scale Beyond GPU Memory Limits with Host KV Cache for Dedicated Endpoints

NVIDIA Nemotron™ 3 Nano Omni, Day-0 on FriendliAI: Unified Multimodal Reasoning, at Peak Performance

Vulnerability Discovery with Open-Weight GLM-5: Frontier Quality at 1/7 the Cost of Closed Models

GLM-5.1 on FriendliAI: The Long-Horizon Agentic Engineering Model at Peak Performance

FriendliAI Now Supports Anthropic Messages API

FriendliAI and Samsung Cloud Platform Forge Strategic Alliance to Power Frontier Model AI Inference on NVIDIA B300 GPUs

FriendliAI Appoints Brian Yoo, Former Moloco COO, as Chief Business Officer to Drive Next Phase of Hypergrowth

Running OpenClaw with NemoClaw and FriendliAI

Automating Industrial Inspection with Vision Language Models

FriendliAI Achieves SOC 2 Type II and HIPAA Compliance

Integrating FriendliAI with OpenClaw

Your Coding Agent is Only as Fast as Your Model API