- May 19, 2026

- 5 min read

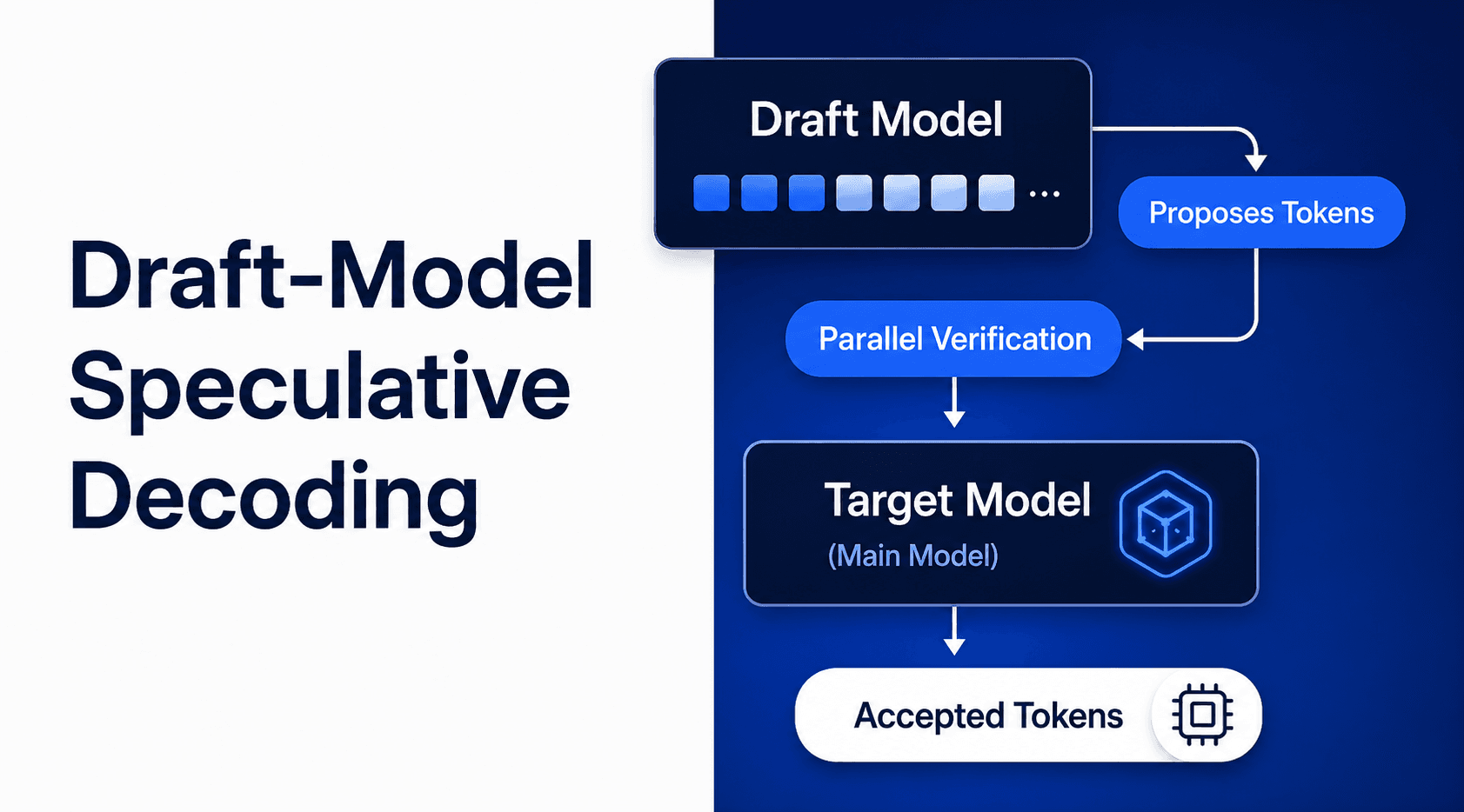

Accelerating Inference on Friendli Dedicated Endpoints with Draft-Model Speculative Decoding

- June 6, 2026

- 5 min read

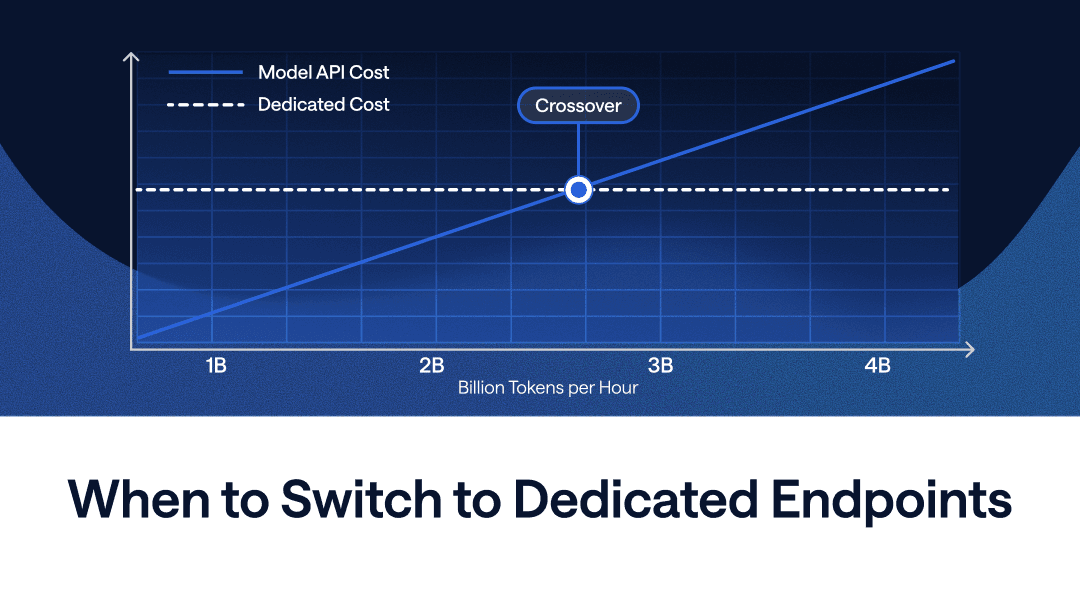

At What Scale Do Dedicated Endpoints Make Sense?

Crossover

Workload

Inference

- June 4, 2026

- 4 min read

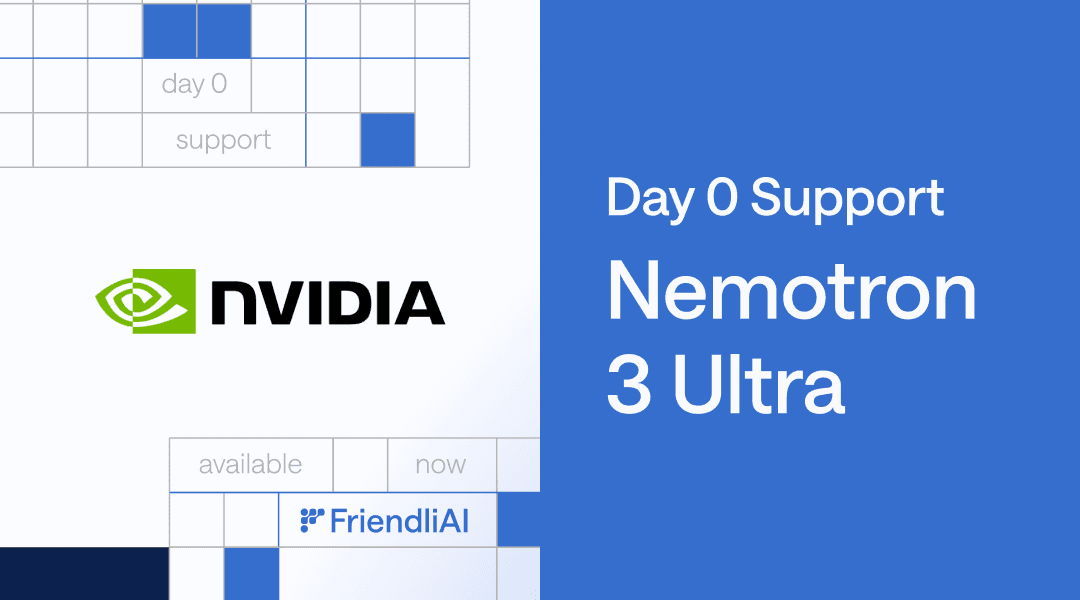

Run NVIDIA's Most Powerful Open Reasoning Model on Day 0 — Nemotron 3 Ultra on FriendliAI

NVIDIA

Nemotron

Agentic AI

- June 4, 2026

- 4 min read

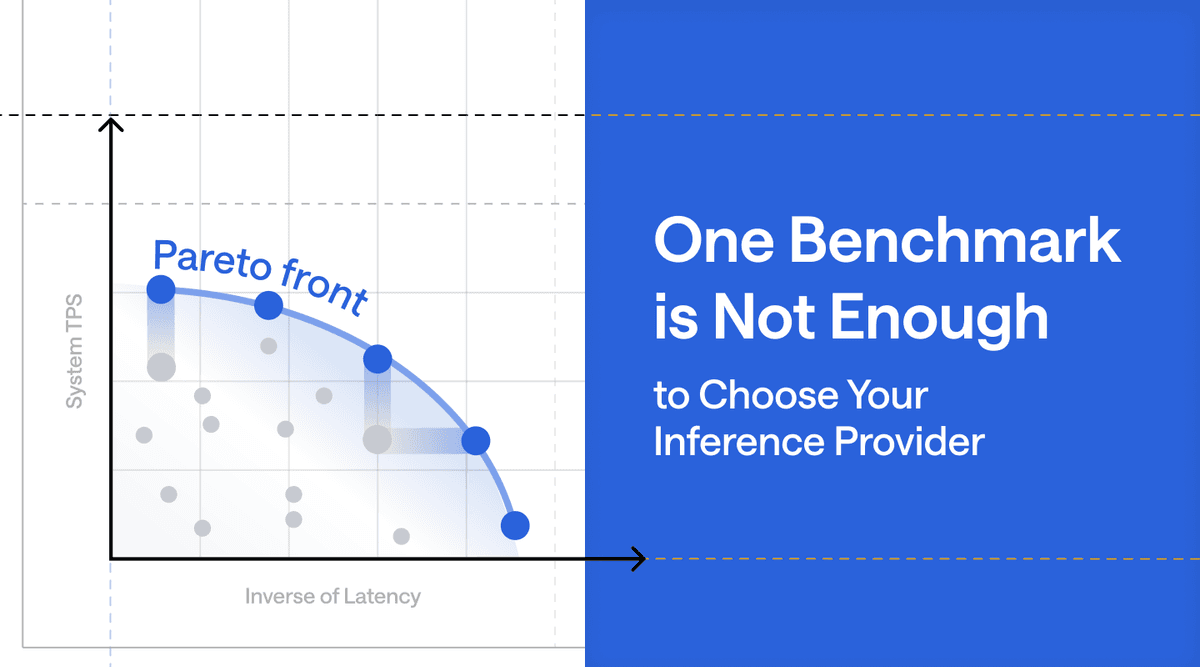

One Benchmark Is Not Enough to Choose Your Inference Provider

LLM Inference

Inference Benchmarking

Performance Optimization

- May 28, 2026

- 5 min read

Kimi K2.6 Meets FriendliAI: Frontier Agentic AI, Deployed in One Click

Moonshot AI

Inference

Dedicated Endpoints

- May 15, 2026

- 5 min read

What's So Special About DeepSeek V4? Find Out On FriendliAI

DeepSeek-V4

Inference

Dedicated Endpoints

- May 11, 2026

- 3 min read

FriendliAI Expands to San Francisco to Scale Frontier AI Inference for Open-Weight and Custom Models

Expansion

Growth

Scale

- May 7, 2026

- 5 min read

Gemma-4-31B-it API on FriendliAI: #1 Output Speed & Response Time

Gemma

Inference

Model APIs

- April 29, 2026

- 4 min read

Scale Beyond GPU Memory Limits with Host KV Cache for Dedicated Endpoints

KV Cache

Dedicated Endpoints

Long-Context Inference

- April 29, 2026

- 5 min read

NVIDIA Nemotron™ 3 Nano Omni, Day-0 on FriendliAI: Unified Multimodal Reasoning, at Peak Performance

NVIDIA

Nemotron