Overview

Friendli Dedicated Endpoints let you run custom or open-source generative AI models on dedicated GPU hardware, powered by Friendli’s serving engine, without sharing resources or managing infrastructure. You can bring your own model or choose from Hugging Face and Weights & Biases, select the right GPU for your workload, and start serving in minutes. This quickstart walks you through launching your first dedicated endpoint.

1. Log in or sign up

- If you have an account, log in using your preferred SSO or email/password combination.

- If you’re new to FriendliAI, create an account for free.

2. Access Friendli Dedicated Endpoints

- In the left sidebar, find the Dedicated Endpoints menu.

- Click it to open the endpoint list page.

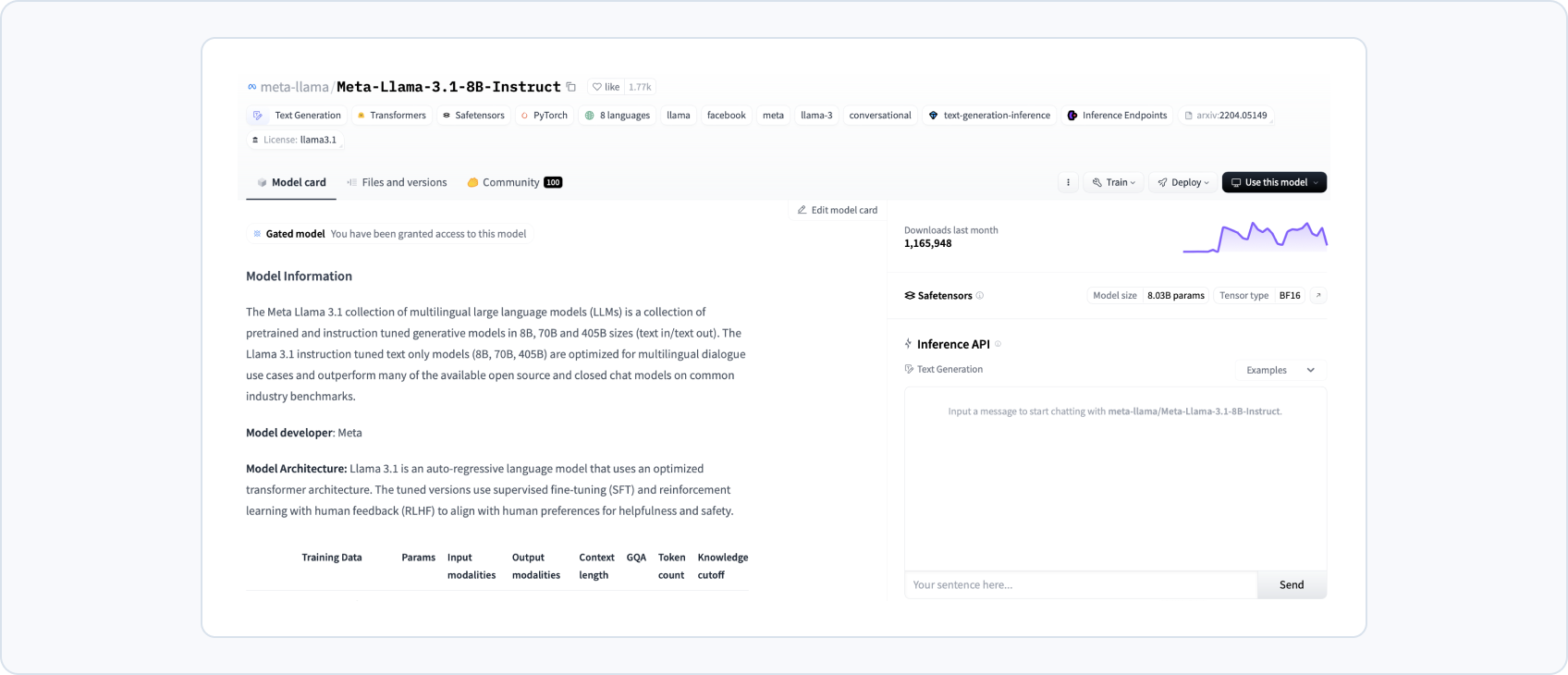

3. Prepare your model

- Choose a model to serve from Hugging Face or Weights & Biases, or upload your custom model to our cloud.

4. Deploy your endpoint

- Deploy your endpoint using the model you prepared in step 3 and an instance with your desired GPU specification.

- You can also configure the number of replicas and the max-batch-size for your endpoint.

5. Generate responses

- You can generate responses in two ways: through the playground or through the endpoint URL.

- To try out your custom model, use the ChatGPT-like interface in the playground tab.

- For general usage, send queries to your model through our API at the endpoint address shown on the endpoint information tab.

Generating responses through the endpoint URL

Refer to this guide for general instructions on Personal API Keys. For a more detailed tutorial tailored to your use case, refer to our tutorials for using Hugging Face models and W&B models.
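As a rough illustration of querying an endpoint over HTTP, the sketch below builds and sends a request using only the Python standard library. It rests on several assumptions not stated on this page: that the endpoint accepts an OpenAI-style chat completions JSON body, that the Personal API Key is passed as a Bearer token, and that the model field carries your endpoint's identifier. The environment variable names and helper functions are hypothetical; copy the real address and identifier from the endpoint information tab.

```python
import json
import os
import urllib.request


def build_chat_request(endpoint_url, api_key, model, prompt):
    """Build (but do not send) a POST request with a chat-style JSON body.

    Assumption: the endpoint accepts an OpenAI-style payload and a
    Bearer token. Check the endpoint information tab for the exact format.
    """
    payload = {
        "model": model,  # assumed: the identifier of your dedicated endpoint
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


if __name__ == "__main__":
    # Hypothetical environment variable names, used only for this sketch.
    url = os.environ.get("FRIENDLI_ENDPOINT_URL")
    key = os.environ.get("FRIENDLI_API_KEY")
    model = os.environ.get("FRIENDLI_ENDPOINT_ID")
    if url and key and model:
        print(send(build_chat_request(url, key, model, "Hello!")))
    else:
        print("Set FRIENDLI_ENDPOINT_URL, FRIENDLI_API_KEY and FRIENDLI_ENDPOINT_ID first.")
```

Separating request construction from sending makes it easy to inspect the headers and body before any network call is made. Reading the key from the environment keeps it out of source control.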