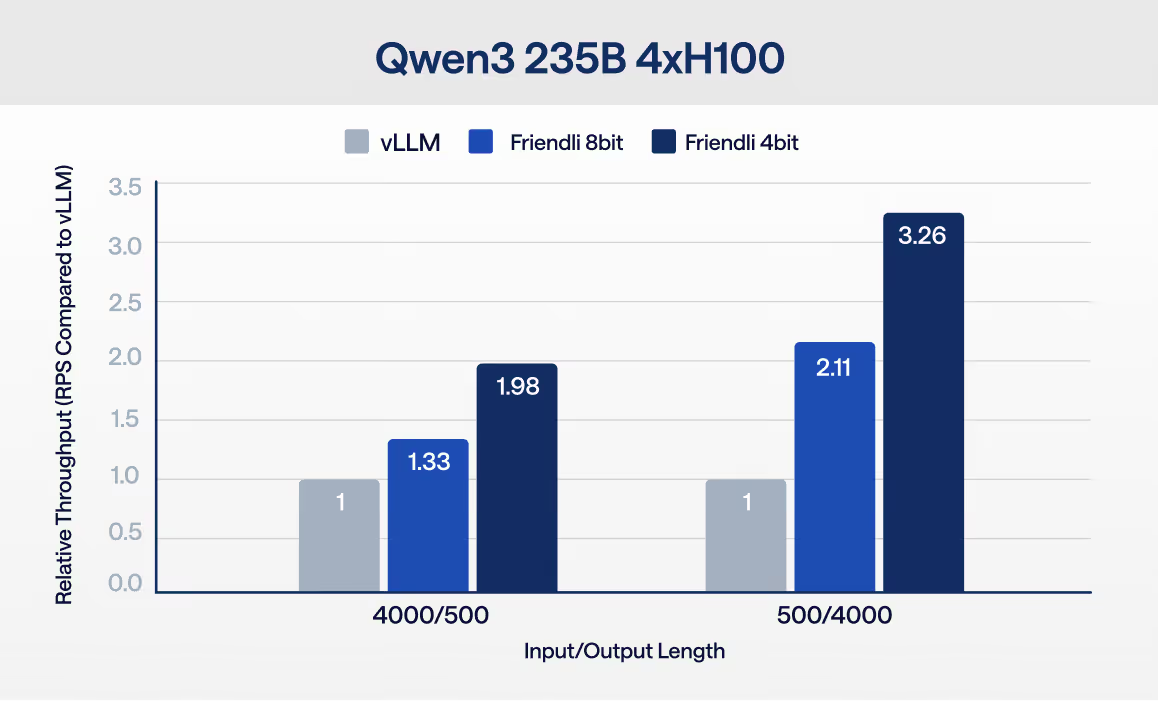

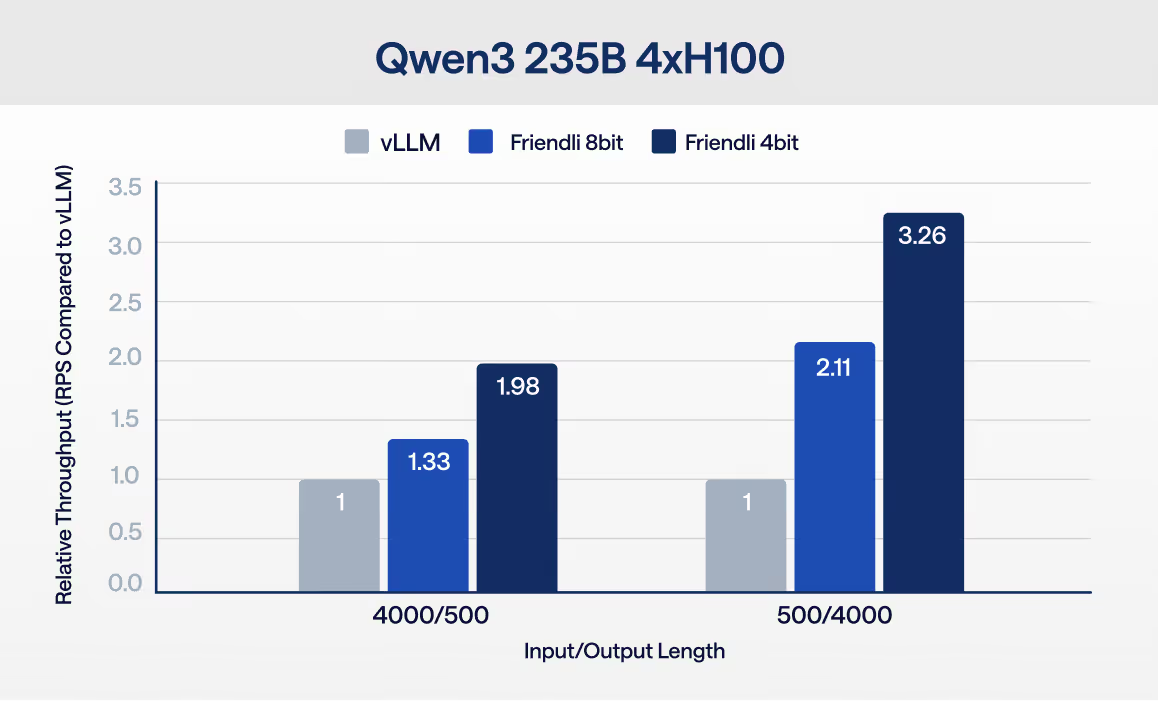

Whether you're running open-source models on another inference platform or relying on closed APIs like OpenAI, switching is simple. Get 99.99% reliability, 3× higher throughput, and up to 90% lower costs—with minimal changes to your stack.

Many teams already run open-source models, then hit bottlenecks as traffic grows: latency variance, throughput ceilings, and scaling overhead. FriendliAI is an inference platform that helps teams run open models with lower latency, higher throughput, and up to 90% lower inference costs without changing their application.


1. Submit the form with your details and current provider bill.
2. We review and approve your credit amount.
3. Start running inference on FriendliAI using your credits.