LG AI Research powers production deployment of K-EXAONE with FriendliAI

Overview

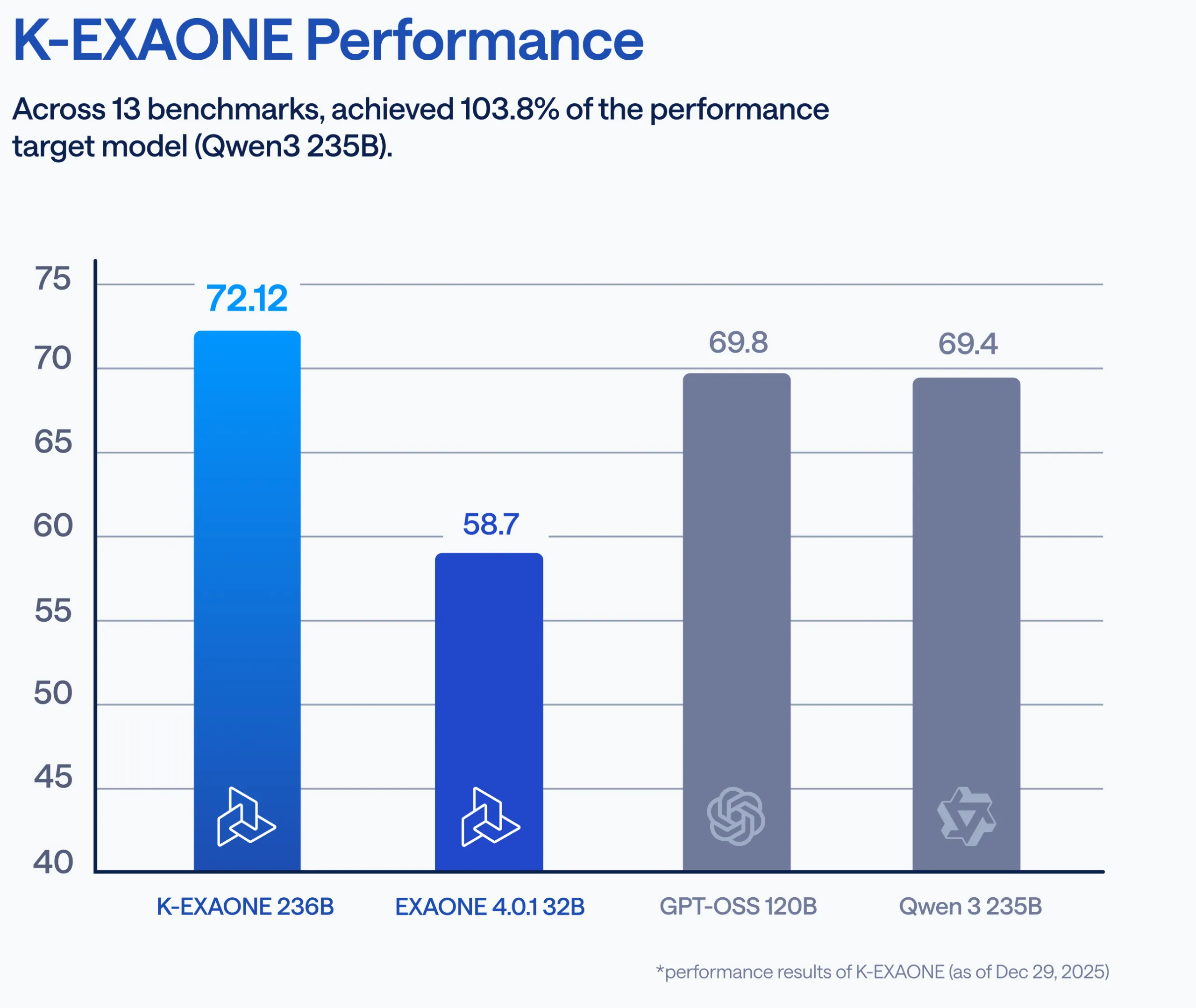

LG AI Research is a leading AI research organization developing large-scale foundation models, including K-EXAONE, a multilingual, Mixture-of-Experts model designed for enterprise and public-sector workloads.

Moving K-EXAONE from research into real-world deployment meant finding an inference platform that could hold up under production demands: fast, cost-efficient, and flexible enough to fit how internal teams actually work.

They chose FriendliAI as their production inference layer.

About K-EXAONE

K-EXAONE is LG AI Research's sovereign foundation model, designed for enterprise-grade applications. Built on a Mixture-of-Experts architecture with Hybrid Attention, it delivers compute-efficient inference while keeping memory bottlenecks in check. The model supports six languages — Korean, English, Spanish, German, Japanese, and Vietnamese — with context lengths of up to approximately 260K tokens, multi-token prediction for faster response generation, and improved token efficiency over previous EXAONE versions.

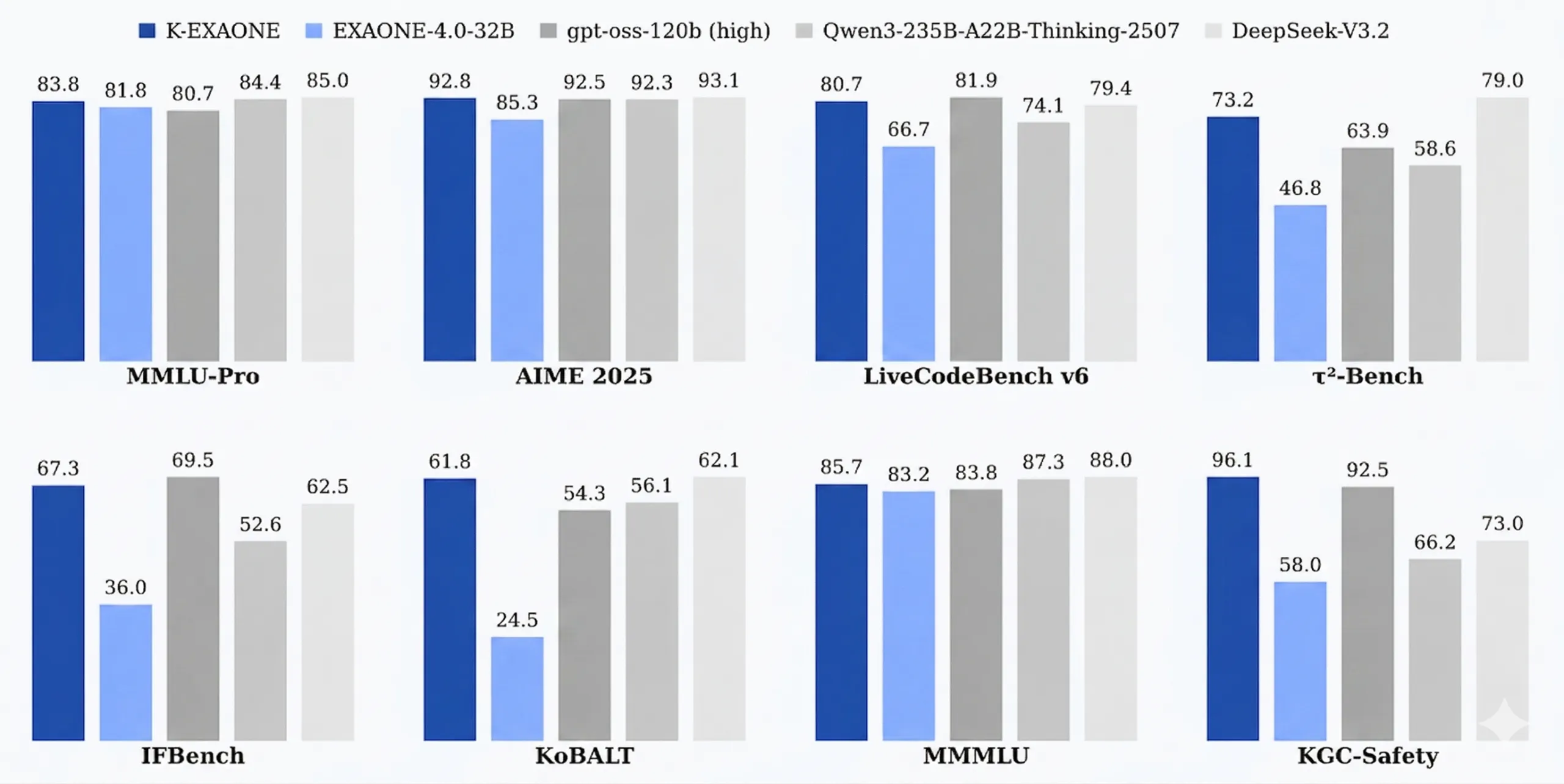

K-EXAONE ranks among the top open-weight models globally across major benchmarks, including reasoning, coding, multilingual understanding, and safety.

Challenges

For LG AI Research, the real challenge began when moving K-EXAONE into production environments. Their team faced:

- High GPU cost when serving large MoE models with baseline inference stacks
- Memory bottlenecks caused by long-context workloads
- Difficulty scaling inference reliably during peak usage
- Limited internal resources to build and maintain optimized serving pipelines

Like many research organizations, LG AI Research wanted their engineers focused on model innovation, not infrastructure engineering.
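To make the long-context memory challenge above concrete, here is a rough back-of-the-envelope sketch of how a key-value (KV) cache grows with sequence length under plain full attention. The layer count, head count, and head dimension are hypothetical placeholders, not K-EXAONE's actual configuration, and the formula ignores the savings that a hybrid attention design is meant to provide.

```python
def kv_cache_bytes(seq_len, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Estimate the KV-cache size of ONE sequence under standard full attention.

    The leading factor of 2 covers storing both keys and values;
    bytes_per_value=2 assumes 16-bit (FP16/BF16) cache entries.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model shape for illustration only (NOT K-EXAONE's real values).
HYPOTHETICAL_SHAPE = dict(num_layers=48, num_kv_heads=8, head_dim=128)

for tokens in (8_000, 64_000, 260_000):
    gib = kv_cache_bytes(tokens, **HYPOTHETICAL_SHAPE) / 2**30
    print(f"{tokens:>7} tokens -> {gib:6.2f} GiB per sequence")
```

Because the cache grows linearly with context length, a single request near the upper end of the context window can occupy many times the memory of a short one, which is why long-context serving tends to be memory-bound before it is compute-bound.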

Why FriendliAI

LG AI Research selected FriendliAI for its production-ready inference engine, optimized specifically for large foundation models.

The decision came down to a combination of performance and practicality.

FriendliAI's engine is built to handle the demands of MoE architectures, with advanced memory optimization that keeps long-context workloads running smoothly. Its support for both Dedicated Endpoints and Model APIs gave LG AI Research the deployment flexibility they needed, while stable performance and predictable costs removed the uncertainty that often comes with production scaling. Perhaps most importantly, FriendliAI's turnkey serving stack meant internal teams could move fast without building custom infrastructure from scratch. The result was a clean, direct path from research model to production endpoint.

The Solution

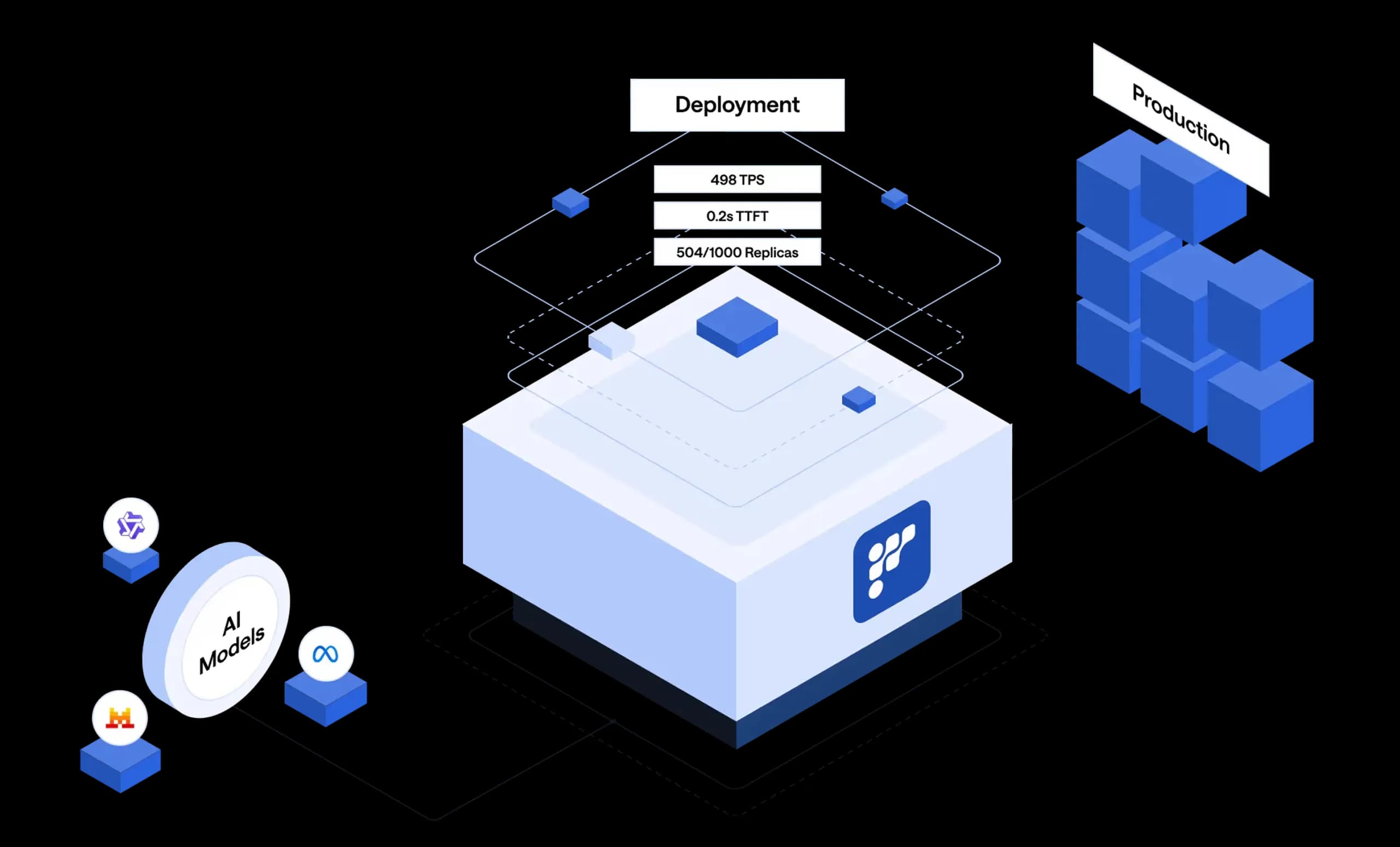

LG AI Research deployed K-EXAONE on FriendliAI using a combination of Dedicated Endpoints and Model APIs, giving them the flexibility to match infrastructure to workload demand across different use cases.

At the core of the deployment, FriendliAI's inference kernels were purpose-built to handle the specific architectural demands of MoE and hybrid attention models, the kind of complexity that generic serving stacks often struggle with. Automatic batching and continuous GPU utilization optimization ensured that compute resources were used efficiently, keeping performance stable even as request volumes fluctuated.
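The story does not describe how FriendliAI's batching works internally. As a generic illustration of why refilling freed GPU slots keeps utilization high, the toy simulation below compares static batching with continuous (iteration-level) batching. The function names and the request lengths are assumptions made for this sketch; it counts decode steps only and is not a model of any real engine.

```python
from collections import deque


def static_batching_steps(lengths, slots):
    """Decode steps when each fixed batch runs until its longest request ends."""
    return sum(max(lengths[i:i + slots]) for i in range(0, len(lengths), slots))


def continuous_batching_steps(lengths, slots):
    """Decode steps when a freed slot is refilled before the next step."""
    queue = deque(lengths)  # requests waiting for a slot, in arrival order
    active = []             # remaining output tokens per running request
    steps = 0
    while queue or active:
        # Fill any free slots from the waiting queue.
        while queue and len(active) < slots:
            active.append(queue.popleft())
        steps += 1
        # One decode step: every running request emits one token,
        # and requests with nothing left to generate release their slot.
        active = [r - 1 for r in active if r - 1 > 0]
    return steps


# Hypothetical output lengths (in tokens): two long requests among short ones.
requests = [8, 2, 2, 2, 8, 2, 2, 2]
print("static:    ", static_batching_steps(requests, slots=4))
print("continuous:", continuous_batching_steps(requests, slots=4))
```

With these made-up lengths, static batching needs 16 decode steps while the continuous variant needs 10, because short requests stop holding slots idle while a long request finishes.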

On the operational side, FriendliAI delivered production-grade endpoints with consistent, predictable latency, a critical requirement for any model moving from research into live applications. Rather than spending engineering cycles building and maintaining custom infrastructure, LG AI Research was able to onboard K-EXAONE quickly and focus its efforts where they mattered most: the model itself.

Beyond this initial deployment, the workflow FriendliAI provided was designed to be repeatable. Each step from model onboarding to endpoint configuration was structured in a way that could be carried forward to future models without starting from scratch. For a research organization constantly pushing new checkpoints toward production, that kind of consistency is as valuable as raw performance.

With FriendliAI as the serving layer, K-EXAONE made the full journey from research checkpoint to production-ready API, reliably, efficiently, and at scale.

Results

By deploying on FriendliAI, LG AI Research saw an immediate, measurable threefold increase in traffic and successfully deployed K-EXAONE to production across multiple environments.

Deployment speed improved dramatically: with Friendli Endpoints, LG AI Research reduced model deployment time by several weeks while achieving maximum inference performance. Significantly faster time-to-first-token compared to baseline setups, combined with improved throughput through optimized inference pipelines, made for a noticeably more responsive model in production.

Reduced GPU overhead through efficient resource utilization kept costs predictable as usage grew, and FriendliAI's elastic scaling meant the team could handle demand spikes without over-provisioning capacity. Most importantly, the partnership enabled internal teams to focus on model development instead of serving infrastructure, keeping research momentum high as K-EXAONE scaled.

“Both our 32B EXAONE and 236B K-EXAONE models run extremely fast on FriendliAI's platform, with customers expressing high satisfaction with the performance. FriendliAI's expert support has shortened testing and evaluation by several weeks, helping customers adopt and scale the full EXAONE model family faster than ever.”

Woohyung Lim, Head of LG AI Research

Deploy Your Models with FriendliAI

FriendliAI helps enterprise teams and AI organizations turn foundation models into reliable production systems — with optimized inference, flexible deployment options, and the reliability that enterprise workloads demand.

Serve high-performance inference with FriendliAI.

Related Customer Stories

Learn how leading companies achieve unmatched performance, scalability, and reliability with FriendliAI

Rock-solid reliability with ultra-low tail latency.

Scale to trillions of tokens with 50% fewer GPUs, thanks to FriendliAI.

Fluctuating traffic is no longer a concern because autoscaling just works.

Friendli Engine is an irreplaceable solution for generative AI serving.