Upstage scales AI-powered article proofreading with FriendliAI

Overview

Upstage is an AI company providing an article proofreading service powered by large language models. The service is currently live with Chosun Biz, one of Korea's major business news outlets, with plans to onboard Chosun Ilbo, one of the country's largest daily newspapers, as the next customer.

As Upstage's proofreading service grew in scope and customer count, the team needed an inference platform that could efficiently handle bursty, time-sensitive traffic, consolidate workloads from multiple vendors, and scale cost-effectively without over-provisioning GPU resources.

They chose FriendliAI Dedicated Endpoints as their production inference layer.

Challenges

Upstage's article proofreading service operates under a unique traffic pattern: submissions are concentrated in short windows — typically once or twice a day — creating sharp peaks followed by extended periods of low utilization. This made efficient infrastructure sizing a persistent challenge.

Their team faced:

- Bursty, time-sensitive traffic tied to editorial submission windows, requiring reliable burst capacity without constant over-provisioning

- GPU underutilization during off-peak hours, increasing cost per inference without a flexible scaling mechanism

- Workload fragmentation across multiple vendors, including an existing deployment on Fireworks that they wanted to consolidate onto a single, more efficient platform

- Performance pressure to improve tokens per second (TPS) to keep pace with the real-time demands of their online proofreading service

- Growth on the horizon, with the addition of Chosun Ilbo requiring a platform that could scale reliably to serve a larger customer base

Why FriendliAI

Upstage selected FriendliAI Dedicated Endpoints because they handle variable, peak-driven traffic with built-in autoscaling and fault recovery, so Upstage does not have to manage the underlying infrastructure complexity itself.

Dedicated Endpoints provided reliable, isolated inference capacity for production workloads, with autoscaling that absorbed peak traffic during article submission windows without any manual intervention. Automatic fault recovery ensured continuity during unexpected surges or failures, keeping the service stable when it mattered most.

On the efficiency side, FriendliAI confirmed that the current A100 deployment has enough headroom to handle twice the current traffic load, giving Upstage confidence that the platform can grow with demand. That growth path is further supported by clear upgrade flexibility, with a straightforward route to H100 or H200 GPUs to improve TPS as usage scales.

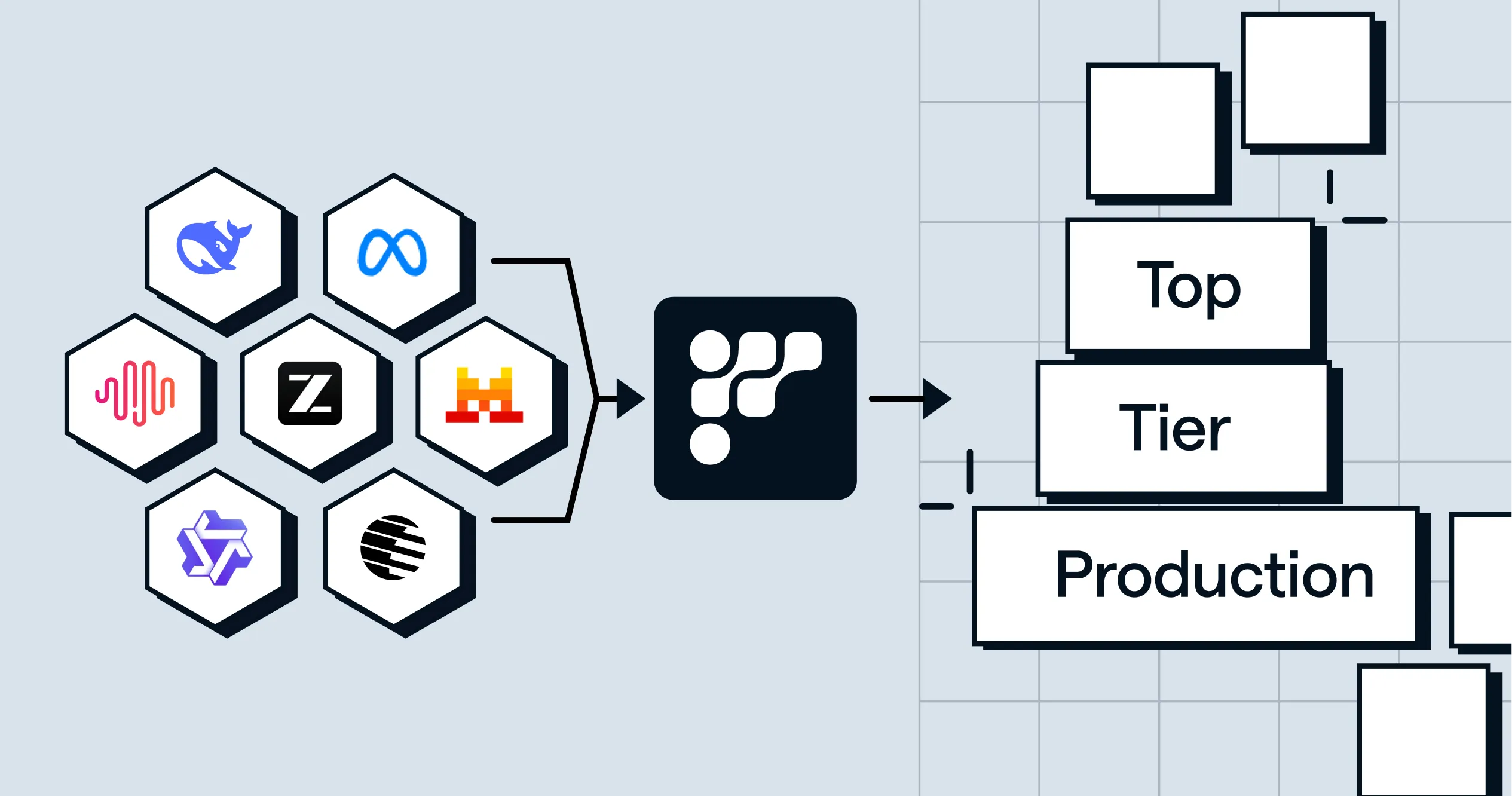

Beyond performance, FriendliAI also offered a path to consolidation, enabling Upstage to migrate existing Fireworks workloads onto a single, unified inference platform and reduce the operational overhead of managing multiple serving environments.

The Solution

Upstage deployed its proofreading and embedding workloads on FriendliAI Dedicated Endpoints, with autoscaling configured to absorb the traffic variability of peak editorial hours. That variability is driven largely by article submission windows, during which demand can spike unpredictably.

At the core of the deployment, dedicated A100 GPU inference provided the consistent, isolated capacity needed for reliable production model serving. Autoscaling was configured to expand up to two GPUs during testing and peak traffic phases, ensuring that performance held steady without requiring manual intervention when load increased. Automatic fault recovery added another layer of resilience, maintaining service continuity in the event of unexpected surges or failures and keeping the platform dependable under real-world conditions.

Looking ahead, FriendliAI also provided a clear path for Upstage to consolidate workloads currently running on Fireworks onto a single, unified inference platform, reducing the operational complexity of managing multiple serving environments. And as demand continues to grow, a well-defined upgrade trajectory to H100 and H200 GPUs gives Upstage the confidence that the infrastructure can scale to meet future TPS requirements without a disruptive platform change.

Results

FriendliAI Dedicated Endpoints delivered the reliability and flexibility Upstage needed to serve a growing editorial AI platform.

Autoscaling and automatic fault recovery handled varying traffic patterns — including sharp editorial submission peaks — without manual intervention or service disruption, keeping the platform stable across unpredictable demand cycles. Capacity analysis further confirmed that the current A100 deployment can comfortably absorb double Upstage's existing traffic load, providing a strong confidence baseline ahead of the planned Chosun Ilbo expansion.

On the operational side, Upstage is actively migrating workloads from Fireworks to FriendliAI, consolidating their inference stack onto a single platform and reducing the overhead that comes with managing multiple vendors.

| Focus Area | Goal |
| --- | --- |
| GPU Efficiency | Optimize the dedicated vs. autoscaled GPU mix to minimize OPEX |
| TPS Performance | Improve tokens per second via a potential H100/H200 upgrade |
| Vendor Consolidation | Migrate all Fireworks workloads to FriendliAI |
| Customer Expansion | Scale to support Chosun Ilbo onboarding |

“Fluctuating traffic is no longer a concern because autoscaling just works.”

Deploy Your Models with FriendliAI

FriendliAI helps enterprise teams and AI organizations turn foundation models into reliable production systems — with optimized inference, flexible deployment options, and the reliability that enterprise workloads demand.

Serve high-performance inference with FriendliAI.
