NextDay AI Cuts LLM Serving Costs by 50% and Boosts Throughput 2–3×

Overview

NextDay AI builds cutting-edge chatbot experiences that feel real, personal, and fun—allowing users to engage with virtual personas ranging from fictional characters to historical icons and celebrity voices.

As their user base and usage scaled, so did the cost of serving large language models. Running multiple high-performance LLMs at massive token volumes strained both infrastructure and budget.

By deploying FriendliAI's Friendli Container, NextDay AI achieved:

- 2-3x increase in LLM throughput

- 50% reduction in operational GPU costs

- Seamless integration into existing GPU infrastructure

Challenges

NextDay AI's chatbot platform delivers highly personalized interactions tailored to individual users. This deep level of personalization demands:

- High throughput from generative models

- Low latency to ensure natural conversational experiences

- Large-scale infrastructure, processing roughly 3 trillion tokens per month

- Multiple custom and open-source LLMs running concurrently

They initially relied on tens of NVIDIA H100 GPUs to serve requests — but scaling further meant sharply rising costs.
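To put roughly 3 trillion tokens per month in perspective, the Python sketch below converts a monthly token volume into an average per-second rate. It assumes a 30-day month and traffic spread evenly over time. Real chat traffic is peaky, so sustained peak rates would be higher than this average.

```python
SECONDS_PER_DAY = 86_400


def average_tokens_per_second(tokens_per_month: float, days_per_month: int = 30) -> float:
    """Average token rate, assuming traffic is spread uniformly across the month."""
    return tokens_per_month / (days_per_month * SECONDS_PER_DAY)


rate = average_tokens_per_second(3e12)  # ~3 trillion tokens per month
print(f"{rate:,.0f} tokens/s on average")
```

Under these assumptions, 3 trillion tokens per month works out to roughly 1.16 million tokens per second, around the clock.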

The Solution

NextDay AI adopted Friendli Container, powered by Friendli Inference, to efficiently serve LLMs at scale. Two core technologies made the difference.

Friendli DNN Library

The Friendli DNN Library is a set of highly optimized GPU kernels designed specifically for generative AI workloads. By supporting advanced quantization formats such as FP8 and AWQ, it reduces the model's memory footprint and improves overall GPU efficiency, delivering faster inference on the same hardware. Quantization often slows down real-world throughput, for example through dequantization overhead in generic kernels; FriendliAI's optimizations turn it into a performance gain instead.

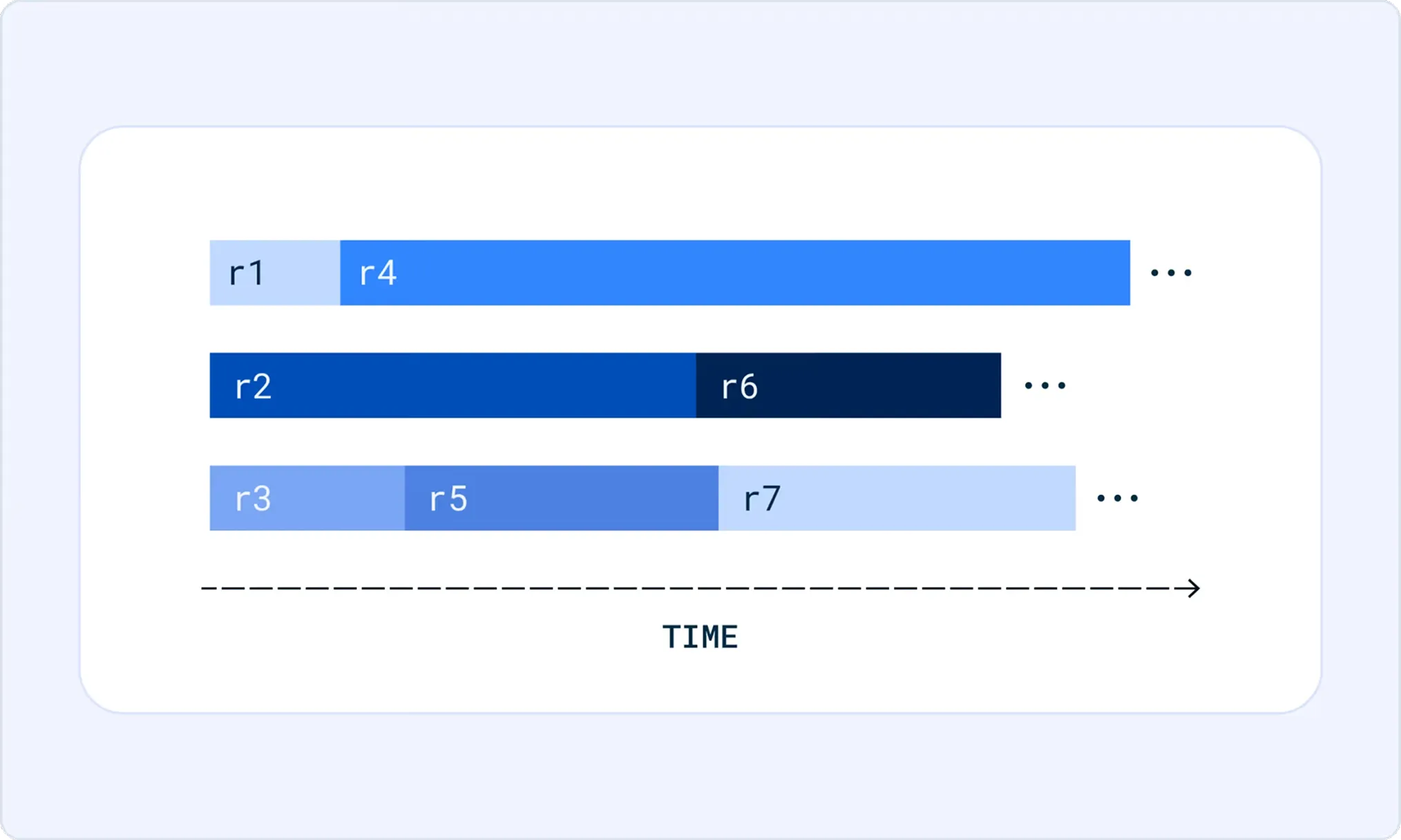
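As a rough illustration of why lower-precision formats matter, the sketch below estimates weight memory for a hypothetical 70B-parameter model (NextDay AI's actual model sizes are not stated) at 16-bit, FP8, and 4-bit precision, the last being the weight width commonly used with AWQ. It counts weights only and ignores the KV cache, activations, and the small overhead of quantization scales; the 80 GB figure assumes the 80 GB H100 variant.

```python
# Bytes per parameter for each weight format (weights only).
BYTES_PER_PARAM = {
    "FP16/BF16": 2.0,
    "FP8": 1.0,
    "4-bit (AWQ-style)": 0.5,
}

H100_MEMORY_GB = 80.0  # assumes the 80 GB H100 variant
NUM_PARAMS = 70e9      # hypothetical 70B-parameter model


def weight_footprint_gb(num_params: float, bytes_per_param: float) -> float:
    """Weight memory in decimal gigabytes, ignoring KV cache, activations, and scales."""
    return num_params * bytes_per_param / 1e9


for fmt, bpp in BYTES_PER_PARAM.items():
    gb = weight_footprint_gb(NUM_PARAMS, bpp)
    share = gb / H100_MEMORY_GB
    print(f"{fmt:>18}: {gb:6.1f} GB of weights ({share:.2f}x one 80 GB H100)")
```

Halving the bytes per parameter halves the weight footprint, which leaves more GPU memory for the KV cache and therefore room for more concurrent sequences per GPU.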

Iteration Batching

Traditional batching methods struggle with varied sequence lengths and concurrent requests: a batch typically runs until its longest request finishes, so slots freed by shorter requests sit idle. FriendliAI's iteration batching removes this bottleneck by scheduling at the level of individual generation iterations, letting new requests join and finished requests leave the batch at every step. This increases usable throughput per GPU while maintaining low latency for interactive use cases. The result is higher real-world throughput without sacrificing responsiveness.
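The difference between request-level and iteration-level scheduling can be seen in a toy Python simulation. This is not FriendliAI's scheduler: it assumes every decoding step costs the same regardless of batch size, ignores prefill and memory limits, and counts only how many decoding steps it takes to finish a set of requests with mixed output lengths.

```python
from collections import deque
from typing import List


def static_batching_steps(lengths: List[int], batch_size: int) -> int:
    """Each batch runs until its longest request finishes; freed slots stay idle."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def iteration_batching_steps(lengths: List[int], batch_size: int) -> int:
    """Scheduling happens every decoding step: finished requests leave and
    queued requests take their slots immediately."""
    queue = deque(lengths)
    running: List[int] = []  # tokens still to generate for each in-flight request
    steps = 0
    while queue or running:
        # Fill any free slots before this iteration.
        while queue and len(running) < batch_size:
            running.append(queue.popleft())
        # One decoding iteration produces one token per running request.
        running = [r - 1 for r in running]
        steps += 1
        # Completed requests exit the batch right away.
        running = [r for r in running if r > 0]
    return steps


requests = [100, 5, 5, 5, 100, 5, 5, 5]  # output lengths in tokens
print(static_batching_steps(requests, batch_size=4))
print(iteration_batching_steps(requests, batch_size=4))
```

With four slots, static batching needs 200 steps because each batch waits for its 100-token request, while iteration batching finishes in 105 steps by refilling slots as the short requests complete.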

Why This Matters

Today's AI products live and die by performance and cost efficiency. With demand for personalized chat experiences growing rapidly, infrastructure decisions directly affect product viability and growth.

Friendli Container enabled NextDay AI to slash GPU costs while scaling throughput — unlocking more capacity without expensive hardware expansion.

Results

Deploying Friendli Container unlocked major improvements:

| LLM Throughput | GPU Operational Costs | Integration Effort |
|---|---|---|
| 2-3x increase | 50% reduction | Minimal effort |

By combining efficient quantization with iteration batching, NextDay AI now delivers more service capacity with fewer GPUs, saving money while scaling performance.
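The link between the throughput and cost figures can be checked with simple arithmetic: if each GPU serves k times more tokens per second, the same load needs roughly 1/k as many GPUs. The Python sketch below uses hypothetical load and per-GPU numbers (not NextDay AI's actual figures), rounds up to whole GPUs, and assumes GPU cost scales linearly with GPU count.

```python
import math


def gpus_needed(load_tps: float, per_gpu_tps: float) -> int:
    """GPUs required to sustain a token rate, rounded up to whole GPUs."""
    return math.ceil(load_tps / per_gpu_tps)


LOAD_TPS = 1_000_000       # hypothetical aggregate tokens per second
BASELINE_PER_GPU = 25_000  # hypothetical per-GPU tokens per second before optimization

baseline = gpus_needed(LOAD_TPS, BASELINE_PER_GPU)
for speedup in (2, 3):
    optimized = gpus_needed(LOAD_TPS, BASELINE_PER_GPU * speedup)
    saving = 1 - optimized / baseline
    print(f"{speedup}x throughput: {baseline} -> {optimized} GPUs ({saving:.0%} fewer)")
```

A 2x gain halves the GPU count, which lines up with the reported 50% cost reduction at the lower end of the 2-3x range; a 3x gain would allow somewhat larger savings under the same assumptions.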

With Friendli Container, NextDay AI was able to serve more users with the same infrastructure while reducing the cost per token served, directly improving the economics of running generative AI at scale. High-quality, fast, and personalized interactions were maintained throughout, and with infrastructure complexity off their plate, the engineering team could redirect resources toward product innovation.

FriendliAI's solution made inference cheaper, faster, and easier to manage, changing what it takes to serve generative AI at scale.

“Friendli Inference has enabled us to scale our operations cost-efficiently, allowing us to process over trillions of tokens each month with exceptional efficiency while cutting our GPUs by 50%. The performance and cost savings consistently exceed our expectations.”

Deploy Your Models with FriendliAI

FriendliAI helps enterprise teams and AI organizations turn foundation models into reliable production systems — with optimized inference, flexible deployment options, and the reliability that enterprise workloads demand.

Serve high-performance inference with FriendliAI.
