SK Telecom powers enterprise AI agents at scale with FriendliAI

Overview

SK Telecom (SKT) is South Korea's leading telecom operator, renowned for its innovative mobile services, extensive 5G infrastructure, and continued advancements in AI development. As SKT expanded its AI capabilities to serve its massive customer base, the organization needed a production inference platform that could meet the demands of enterprise-grade AI agents with strict SLAs, high reliability, and the ability to handle heavy, unpredictable traffic at scale.

They chose Friendli Dedicated Endpoints as their production inference layer.

Challenges

Building and serving AI agents for a customer base the size of SKT's is an enormous operational challenge.

SKT's team faced:

- Strict SLA requirements with zero tolerance for latency spikes or downtime in customer-facing AI services

- Heavy and variable traffic loads driven by millions of end users interacting with AI agents across mobile and enterprise touchpoints

- High operational costs from inefficient LLM serving infrastructure that struggled to scale economically

- Reliability pressure from production AI workloads that required consistent, predictable performance around the clock

- Deployment complexity that diverted engineering resources away from AI development and toward infrastructure management

Like many large-scale operators, SKT needed a serving solution that could match the ambition of their AI roadmap without becoming an operational burden.

Why FriendliAI

SKT selected FriendliAI for its production-ready inference platform, purpose-built for large language model serving at enterprise scale.

At the heart of the decision was the need for dedicated, isolated inference capacity that could be precisely tailored to SKT's specific workload requirements. Friendli Dedicated Endpoints delivered exactly that: high-performance serving with the kind of consistency and reliability that strict SLA environments demand. For a company operating at SKT's scale, even minor latency fluctuations can have significant downstream impact, making consistent low-latency performance a non-negotiable requirement.

Equally important was the platform's ability to handle heavy, concurrent request volumes without degradation. As SKT's AI agent use cases grew in complexity and user demand, FriendliAI's infrastructure absorbed traffic spikes cleanly, ensuring that performance held steady under pressure. Optimized GPU utilization further contributed to significant cost efficiency, eliminating the waste that typically comes with over-provisioning and making the economics of enterprise-scale inference far more predictable.

Perhaps most telling was the speed of the onboarding process. SKT was able to move from evaluation to full production deployment in hours rather than weeks, a turnaround that reflects both the maturity of FriendliAI's platform and the minimal friction of its deployment workflow.

With all of this in place, FriendliAI became the inference backbone connecting SKT's AI agent development directly to production-grade, customer-facing endpoints, bridging the gap between model capability and real-world delivery.

The Solution

SKT deployed its AI agent serving infrastructure on FriendliAI using Dedicated Endpoints, providing the isolation, performance, and control required for enterprise telecom workloads.

FriendliAI's high-throughput inference endpoints were optimized specifically for SKT's LLM serving requirements, delivering stable, low-latency performance even under sustained heavy traffic, a baseline requirement for meeting SKT's strict SLA commitments. Streamlined onboarding eliminated the need for custom infrastructure builds, getting SKT to production quickly and with minimal overhead. The deployment model was also built to scale, giving SKT a repeatable foundation to support its growing AI agent portfolio without starting from scratch each time.

With FriendliAI, SKT transformed its AI agent stack from a high-cost, operationally complex system into a lean, reliable production service.

Results

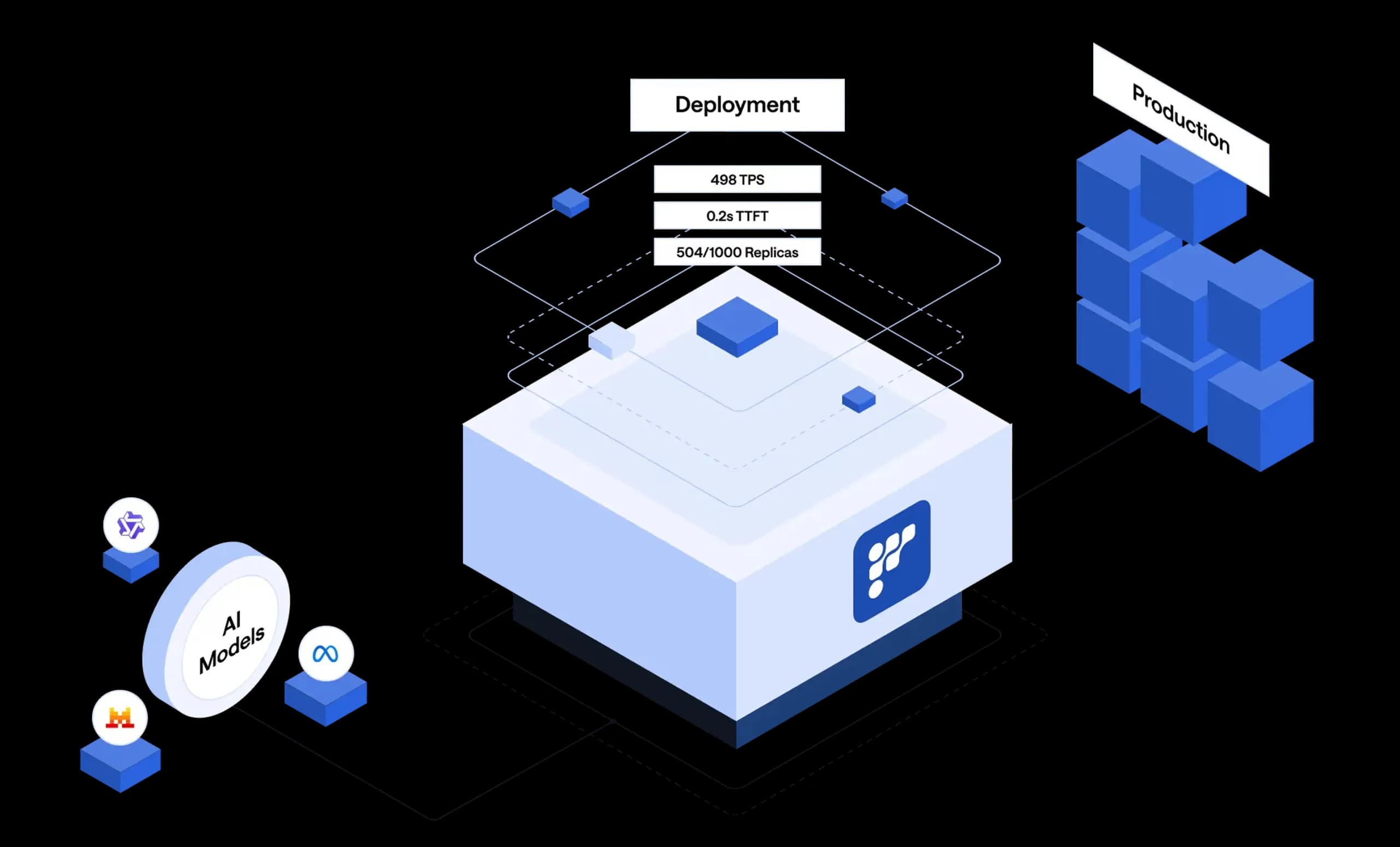

By deploying on FriendliAI Dedicated Endpoints, SK Telecom achieved immediate and measurable impact:

| Metric | Result |
| --- | --- |
| LLM Throughput Increase | 5x within hours of onboarding |
| Cost Savings | 3x reduction in operational costs |
| Time to Production | Achieved full production readiness within hours |
| SLA Compliance | Exceptional reliability meeting enterprise-grade SLA requirements |
| Traffic Efficiency | Significantly improved handling of heavy, concurrent traffic loads |

FriendliAI enabled SKT's engineering teams to focus on building and improving AI agents rather than managing serving infrastructure, accelerating the pace of AI innovation across the organization.

“Rock-solid reliability with ultra-low tail latency.”

Deploy Your Models with FriendliAI

FriendliAI helps enterprise teams and AI organizations turn foundation models into reliable production systems, with optimized inference, flexible deployment options, and the dependability that enterprise workloads demand.


Related Customer Stories

Learn how leading companies achieve unmatched performance, scalability, and reliability with FriendliAI

Our custom model API went live in about a day with enterprise-grade monitoring built in.

Scale to trillions of tokens with 50% fewer GPUs, thanks to FriendliAI.

Fluctuating traffic is no longer a concern because autoscaling just works.

Friendli Engine is an irreplaceable solution for generative AI serving.
